
The world thought Google was caught off guard by the AI revolution. It was not. And the chips it has been quietly building since 2016 may be about to change everything.

Introduction: When everyone wrote Google off

November 2022 was a humbling month to be Google. OpenAI had just released ChatGPT to the public, and the world lost its mind.

Within days, it was clear that something fundamentally different had arrived: a conversational AI that could write, reason, explain and engage in a way that felt surprisingly human.

The tech press declared a new era. And almost everyone agreed on one thing: Google, the company that had essentially invented the modern internet, dominated how the world finds information for two decades and called itself an AI-first company, had been caught sleeping.

The irony was that Google had the researchers, the data and the infrastructure, yet it came second to a company that most people had not heard of. Google had published the academic paper in 2017 that introduced the Transformer architecture, which made ChatGPT possible in the first place. And yet here was a scrappy startup, backed by $1 billion from Microsoft, doing what Google had seemingly been too cautious or too comfortable to do: ship a product that showed ordinary people what AI could actually feel like.

Inside Google's offices, the internal alarm was real, and reports said a “Code Red” was declared. Co-founders Larry Page and Sergey Brin reportedly checked back in, and the company accelerated the development of Bard, its own AI chatbot, and began fast-tracking AI features across its product suite. From the outside, it looked like panic. Like a giant scrambling to catch up.

Google stumbled with its first launch. It rebuilt Bard from scratch and launched Gemini.

What nobody talked about at the time was what Google had been quietly building for almost a decade underneath all of it. Google became confident, and CEO Sundar Pichai gave a hint of what was brewing behind the scenes.

In a recent interview, Pichai pulled back the curtain on how the company actually experienced that moment, and his description sounds nothing like a company in crisis.

Pichai said: “It was obviously very invert-focused in that moment. To me, it was very clear in that moment, ‘Hey, the Overton window shifted.’ I felt like the company was built for that moment. The vertical thing, it's not an accident or something. It was a very intentful. We were in the seventh version of TPUs. I remember it might have been 2016 Google I/O where we announced the TPUs and spoke about we are building AI data centers. This was 2016. The company was operating in an AI-first way. We had deeply internalized this shift. To me, we were behind in terms of frontier LLM models, but we had all the capabilities internally, and we had to execute to meet the moment. But the exciting part was when I look at it from a full stack, we had the research teams, we had the infrastructure teams, we had all the platforms.”

The capabilities Pichai was referring to included the research teams, the infrastructure and the platforms. But the most important one was the one that almost no one outside the company fully understood at the time: the chip.

Part I: The Chip Game

While the rest of the tech world was buying Nvidia, Google was playing a different game all along. Nvidia's GPUs became the essential hardware of the modern AI era, the picks and shovels of the gold rush.

Demand for Nvidia’s H100 chips skyrocketed, outstripping supply so dramatically that access to them became a competitive advantage in its own right. As companies queued up, prices soared and Nvidia's market capitalisation crossed a trillion dollars, then two trillion, then briefly touched three. Jensen Huang became one of the most celebrated CEOs on the planet. Nvidia was not just a chip company anymore. It was the infrastructure layer that the entire AI industry was built on.

Soon, it became the first company to reach a $4 trillion market cap.

Google, meanwhile, had been doing something different since 2016. TPUs, or Tensor Processing Units, are chips designed by Google specifically for the kind of mathematical operations that AI models require. Unlike Nvidia's GPUs, which are general-purpose processors adapted for AI workloads, TPUs are purpose-built from the ground up for one thing: running neural networks efficiently.

The first generation was announced at Google I/O in 2016, almost as a footnote in a broader AI presentation. Few people outside the industry paid much attention. But Google kept building. By the time ChatGPT changed the world in late 2022, Google was already on its seventh generation of TPUs, with years of iterative development, architectural refinement and hard-won engineering experience that no amount of money could simply buy overnight.

What this meant, in practical terms, was that when Google's most sophisticated AI models finally arrived in the form of Gemini (Gemini Nano, Gemini Pro and Gemini Ultra, and the successive versions that followed), they were not just capable; they were fast, accurate and up to date. And they ran on infrastructure that Google owned, controlled and had been perfecting for almost a decade. The rest of the industry had been building on rented land while Google had been quietly laying its own foundation the whole time.

Part II: The Inference problem

For a while, the AI conversation was dominated by one metric: how big is your model, and how well does it perform on benchmarks. Training, the process of feeding enormous amounts of data to an AI model so that it learns patterns, relationships and reasoning capabilities, was treated as the primary challenge. Companies competed on the size of their training runs, the sophistication of their architectures and their performance on standardised tests.

A better-trained model meant a smarter AI. And a smarter AI meant winning.

What the industry slowly and somewhat painfully discovered is that training is only half the problem. The other half is inference: the process of actually running the model in real time to answer a user's question.

In simpler words, when you type something into ChatGPT or Google's Gemini and receive a response, that response is being generated through inference: the model is processing your input and producing an output, in real time, at scale, for potentially millions of users simultaneously.

In practice, this meant inference was hard: computationally intensive, time-sensitive and expensive. A model that produces brilliant answers but takes 30 seconds to generate them is not useful in a consumer product. Users expect responses in seconds. The chip that enables fast, efficient inference therefore becomes an essential cog.

This is where Google’s TPUs became a genuine competitive weapon.

Google used Nvidia’s GPUs for training but used TPUs, which are particularly well-suited to inference workloads, to generate quick answers. Their architecture, purpose-built for the matrix multiplication operations that underpin neural network computation, allows them to process inference requests with a speed and efficiency that general-purpose GPUs struggle to match at scale.
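To make the matrix-multiplication point concrete, here is a minimal sketch in plain Python of a single dense neural-network layer. The function names, weights and inputs are hypothetical illustrations, not code from Google or Nvidia; real accelerators run the same arithmetic on far larger matrices in dedicated hardware.

```python
def matmul(a, b):
    """Multiply matrix a (m x k) by matrix b (k x n), both given as lists of rows."""
    if len(a[0]) != len(b):
        raise ValueError("inner dimensions must match")
    return [
        [sum(a[i][p] * b[p][j] for p in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def dense_layer(inputs, weights, bias):
    """One layer of inference: inputs @ weights + bias, followed by ReLU."""
    pre_activation = matmul(inputs, weights)
    return [
        [max(0, value + bias[j]) for j, value in enumerate(row)]
        for row in pre_activation
    ]


# Hypothetical batch of two requests with three features each.
batch = [[1, 2, 3], [4, 5, 6]]
# Hypothetical layer weights (3 inputs -> 2 outputs) and bias.
weights = [[1, 0], [0, 1], [1, 1]]
bias = [0, -10]

outputs = dense_layer(batch, weights, bias)
```

Almost all of the work sits inside `matmul`, which is why hardware built around that one operation can serve many requests quickly.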

Google’s models, running on Google’s TPUs, in Google’s data centres, delivered responses on Google’s products with a speed that started turning heads.

And then Google did something that no one had quite anticipated: it opened the doors. Rather than keeping its TPU infrastructure exclusively for internal use, Google began offering access to its chips through Google Cloud, allowing external companies, including startups, enterprises and AI labs, to rent TPU capacity for their own workloads. A piece of hardware that had been built to give Google an internal advantage was now a commercial product.

The chip had become a business, and Google Cloud turned out to be another of Google's fastest-growing verticals.

Part III: The day Google frightened Nvidia

The moment that crystallised just how serious the TPU threat had become arrived without much warning. Reports emerged that Meta, one of the largest consumers of AI computing infrastructure in the world and one of Nvidia's most important customers, had signed a deal with Google to use TPUs for certain workloads.

The market reacted immediately. Nvidia's share price dropped and billions of dollars in market capitalisation evaporated in a single day. The signal was clear: if Meta was diversifying away from Nvidia toward Google's custom silicon, the assumption that Nvidia had a permanent lock on AI infrastructure was no longer safe.

Nvidia pushed back, and it did so loudly, in a post on X (formerly Twitter).

“We're delighted by Google's success – they've made great advances in AI and we continue to supply to Google,” the company said in a statement that managed to sound both gracious and dismissive at the same time. “NVIDIA is a generation ahead of the industry – it's the only platform that runs every AI model and does it everywhere computing is done. NVIDIA offers greater performance, versatility, and fungibility than ASICs, which are designed for specific AI frameworks or functions,” it added.

The reference to ASICs (Application-Specific Integrated Circuits), the category that TPUs fall into, was pointed. Nvidia's argument was essentially this: yes, purpose-built chips can be very fast at the specific thing they are designed for.

But general-purpose platforms that can run anything, anywhere, on any framework, are ultimately more valuable. Versatility beats specialisation.

It is a reasonable argument. It is also, notably, the argument of a company that felt the ground shift beneath it.

Nvidia also made a significant strategic move around this period, acquiring Groq, a chip startup that had built a reputation for extraordinarily fast inference performance.

The acquisition was widely read as a direct response to the inference challenge: if the next competitive battleground was not training but serving models quickly and cheaply at scale, Nvidia wanted the best inference hardware in its portfolio.

Part IV: Google strikes back with TPU v8

When everyone thought the war was cooling down, Google’s answer came in the form of its latest generation of custom silicon, designed to address both sides of the AI compute equation simultaneously.

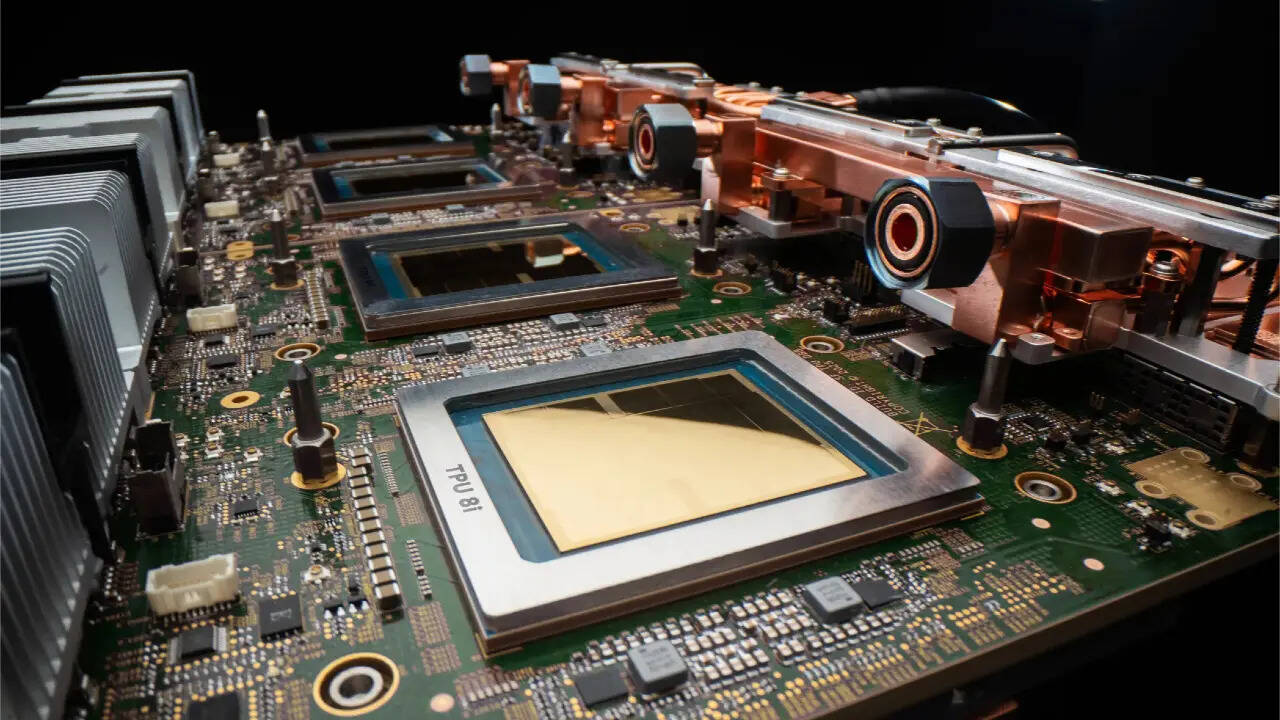

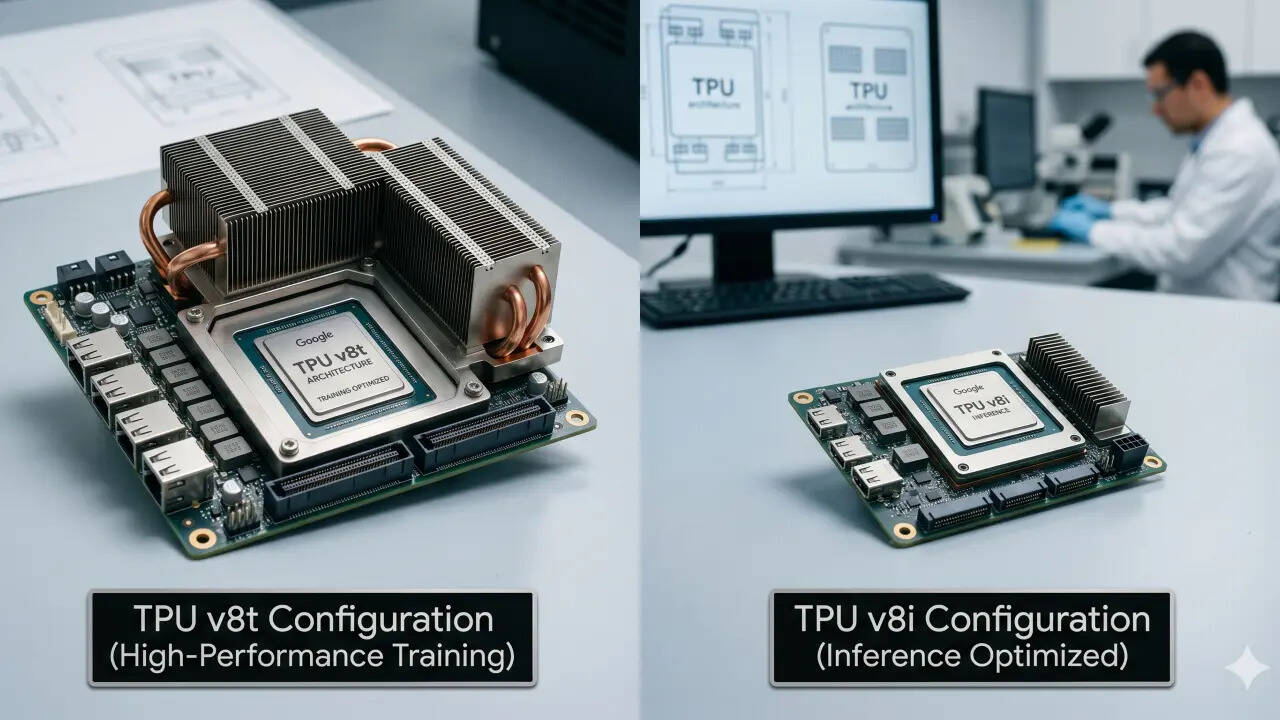

The company announced TPU v8 in two distinct configurations, each targeting a different part of the AI workload spectrum.

The first, TPU 8t, is built for massive-scale training: the kind of enormous, months-long computation required to build the next generation of frontier AI models. It is Google’s answer to the question of whether its custom chips can compete with Nvidia's best hardware when it comes to the raw, sustained power required to train models at the frontier.

The second, TPU 8i, is built for something different: high-performance, low-latency agentic inference. This is the chip designed for a world where AI is not just answering simple questions but operating as an autonomous agent: planning multi-step tasks, executing actions, interacting with external systems, and doing all of it quickly enough that users and enterprise systems do not notice the delay.

Together, the two chips represent something more important than a hardware update: they represent Google's clearest articulation yet of what it is trying to be. Not a search company with an AI strategy. A full-stack AI company: one that owns the research, the models, the chips, the data centres, the cloud platform and the consumer products.

Sundar Pichai's comment about the Overton window is worth returning to, because it captures something important about what Google has been doing and what it is now saying openly.

The Overton window is a concept from political theory that describes the range of ideas the public is willing to consider acceptable at any given moment. Pichai used it to describe the moment ChatGPT changed what the world thought AI could and should do.

The window shifted. Suddenly, people were ready for AI in a way they had not been before.

Google's argument was implicit in Pichai's words and increasingly explicit in the company's product and hardware announcements: it was never behind.

It was waiting for the window to open. And when it did, it had everything it needed: the research, the infrastructure, the chips and the models.

What is harder to dispute is where Google stands now. Its Gemini models are competitive with the best in the world; its TPU infrastructure is attracting customers that Nvidia considered its own; its cloud business is growing; and its chips, multiple generations in the making and built in the years when nobody was paying attention, are now at the centre of one of the most profound technology competitions of the modern era.
